IARPA’s Video LINCS program could be repurposed to spy on protesters, enforce 15-minute smart city compliance: perspective

The Intelligence Advanced Research Projects Activity (IARPA) is putting together a research program for the US spy community to autonomously identify, track, and trace people and their vehicles over long distances and periods of time.

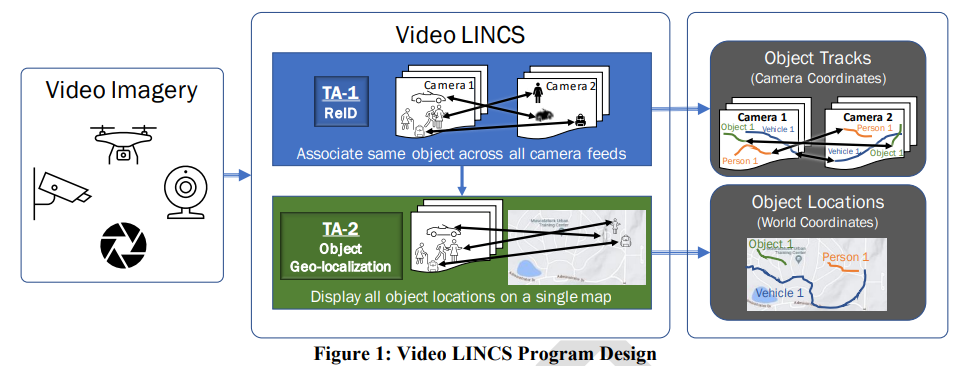

Last week, the research funding arm of the US intelligence community, IARPA, published the technical draft for its Video Linking and Intelligence from Non-Collaborative Sensors (Video LINCS) research program.

The newly updated draft broad agency announcement and funding opportunity details how the US spy community is looking to autonomously identify, track, and trace people, vehicles, and objects by using AI to analyze video footage captured by CCTV cameras, drones, and potentially webcams and phones (as evidenced in the Video LINCS Program Design image below).

“The program will start with person reID, progress to vehicle reID, and culminate with reID of generic objects across a video collection”

IARPA Video LINCS Program

The official reasons given for developing this program have to do with responding to “tragic incidents” that require “forensic analyses,” and to “analyze patterns for anomalies and threats.”

IARPA program director Dr. Reuven Meth also mentioned in the video below that Video LINCS would be used to “facilitate smart city planning.”

But ask yourself, why would the US spy agency funding arm want to develop tools for smart city planning?

“Video LINCS will take labor-intensive workflows and automate them to facilitate forensic analyses, proactive threat detection, and smart city planning”

IARPA Program Director Dr. Reuven Meth

The Sociable previously reported on the initial Video LINCS program announcement on January 9, 2024, when there was limited information publicly available. Last week, IARPA updated its technical specs, giving us a more detailed look at just how deep the US spy apparatus is willing to go in its surveillance pursuits.

The Video LINCS program consists of two Technical Areas (TAs):

- Re-identification (ReID): Autonomously and automatically associating the same object (person, vehicle, or generic object) across a video corpus.

- Object Geo-localization: Geo-locating objects to provide positions for all objects in a common world reference frame.

ReID, according to IARPA, means “the process of matching the same object across a video collection, to determine where the object appears throughout the video.”
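To make that definition concrete, here is a minimal sketch of how re-identification is commonly framed in computer vision: each detection is turned into a feature vector (an "embedding"), and detections from different cameras are matched by similarity. This is not IARPA's method, and nothing in the draft specifies it. The function names, the toy vectors, and the 0.8 threshold are all assumptions made for illustration, and the step that would actually produce embeddings from video is left out entirely.

```python
import numpy as np


def cosine_similarity_matrix(queries, gallery):
    """Cosine similarity between every query vector and every gallery vector."""
    q = queries / np.linalg.norm(queries, axis=1, keepdims=True)
    g = gallery / np.linalg.norm(gallery, axis=1, keepdims=True)
    return q @ g.T


def match_detections(queries, gallery, threshold=0.8):
    """For each query, return the index of the best gallery match,
    or None when no gallery entry is similar enough (hypothetical threshold)."""
    sims = cosine_similarity_matrix(queries, gallery)
    best = sims.argmax(axis=1)
    best_scores = sims[np.arange(len(queries)), best]
    return [int(b) if s >= threshold else None
            for b, s in zip(best, best_scores)]


# Toy embeddings standing in for two objects seen by camera A (the gallery)
# and two detections from camera B (the queries). Values are made up.
gallery = np.array([[1.0, 0.0, 0.0],
                    [0.0, 1.0, 0.0]])
queries = np.array([[0.9, 0.1, 0.0],   # close to gallery entry 0
                    [0.0, 0.0, 1.0]])  # resembles nothing in the gallery

matches = match_detections(queries, gallery)
print(matches)
```

The `None` result for the second query is the point of the threshold: a system that always returns its nearest match would produce exactly the "false matches" the program says it wants to avoid.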

“The goal of the Video LINCS program, is to develop re-identification (reID) algorithms to autonomously associate objects across diverse, non-collaborative, video sensor footage and map re-identified objects to a unified coordinate system (geo-localization)”

IARPA Video LINCS Program
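The geo-localization half of that goal, mapping what a camera sees to "a unified coordinate system," can be illustrated with a simple geometric sketch. It assumes a flat ground plane and a known 3x3 homography matrix `H` for each camera, neither of which comes from the IARPA draft. The matrix values and the function name `pixel_to_ground` are hypothetical, and a real system would have to estimate the mapping from camera pose, which the draft says may be noisy or uncertain.

```python
import numpy as np


def pixel_to_ground(H, pixel):
    """Map an image pixel (u, v) to ground-plane coordinates (x, y)
    using a 3x3 homography H, assuming the point lies on a flat ground plane."""
    u, v = pixel
    x, y, w = H @ np.array([u, v, 1.0])
    # Divide by the homogeneous coordinate to get back to 2D.
    return (x / w, y / w)


# Hypothetical homography: scales pixels by 0.5 and shifts the origin.
H = np.array([[0.5, 0.0, 5.0],
              [0.0, 0.5, 10.0],
              [0.0, 0.0, 1.0]])

position = pixel_to_ground(H, (100, 200))
print(position)
```

Once detections from every camera are expressed in the same ground coordinates, a track that leaves one camera's view can be compared by position as well as appearance with tracks that show up in another.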

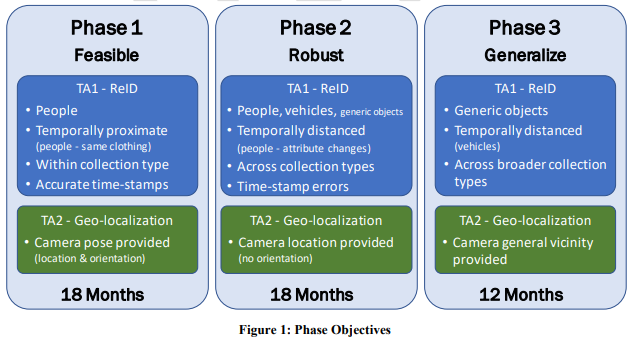

The Video LINCS program, if it does indeed become a fully funded research program, will consist of three phases over the course of 48 months:

- During Phase 1, teams will demonstrate the feasibility of reID for people in a video corpus and object geo-localization to provide all subject motion in a common reference frame. Each person’s clothing will remain the same (short-term / temporally proximate reID) and provided metadata (e.g. time-stamps and camera pose, to the extent available) will be noise free.

- During Phase 2, reID will expand to include people with clothing changes (long-term / temporally distant reID) and vehicles, and will require functionality on generic objects; additional sensor types and collection geometries will be included in the evaluations, and noise will be introduced into provided metadata.

- During Phase 3, evaluation will have a stronger focus on reID of generic objects, temporally distant reID of vehicles will be performed, additional sensor types and collection geometries will be included, and there will be greater uncertainty in camera pose.

The Video LINCS program will look to re-ID people, vehicles, and objects over long distances and periods of time using a kaleidoscope of technologies, including:

- Artificial Intelligence

- Computer vision, including object detection, tracking, person/vehicle/object modeling, generic vision learning

- Deep learning

- Geometric camera projections and inverse projections

- Image and video geo-localization

- Machine learning

- Modeling and simulation

- Open set classification

- Re-identification

- Soft biometrics

- Software engineering

- Software integration

- Systems integration

- Truthed video data collection, annotation (truthing in both anonymized identities and geo-location), including potential Human Subject Research

- Vehicle fingerprinting

- Video data generation (including simulation, generative modeling)

“The system needs to automatically locate the objects and associate them – across scale, aspect, density, crowding, obscuration, etc. – without introducing false detections and false matches”

IARPA Video LINCS Program

Apart from threat detection, forensic analyses, and smart city planning, there are many other potential use cases that could come from this spy program down the road.

For example, the tools and tactics coming from Video LINCS would be able to, hypothetically, identify who was present at a rally, protest, or riot — such as the one that occurred in Washington, DC on January 6, 2021 — and follow their every move as they make their way back home, even when they change their clothes.

Another example could be for keeping track of immigrants at land, air, and sea border crossings.

And it would be invaluable to governments wishing to enforce future lockdowns or low-emission zones in 15-minute smart cities as the authorities would be able to identify who broke protocol while tracking and tracing their every move for law enforcement to hunt them down.

All of this would be done autonomously and automatically.

But hey! Maybe I’m being overdramatic.

After all, the government said it was to keep people safe, and the government has never let us down in always keeping our best interests at heart.

Image by Freepik